Introduction

Autonomous AI agents demand more than reactive command execution: they must integrate complex decision-making, dynamic external tool invocation, and persistent memory management to operate reliably in diverse, constantly evolving environments. Achieving this requires modular architectures that separate the reasoning, memory, and API integration layers. Such modularity reduces code complexity, enables independent scaling, and improves fault isolation, all of which matter for mission-critical deployments.

The engineering challenge extends beyond enabling agents to plan and act autonomously; it also involves robustly handling real-world failure modes—such as inconsistent internal state, API rate limits, network unreliability, and unpredictable tool behavior—that arise in distributed and asynchronous settings. For software architects and engineers developing autonomous AI agents, grasping the trade-offs between symbolic and large language model (LLM)-driven reasoning, managing long-term context, securing and supervising external calls, and incorporating human oversight is essential to delivering scalable, maintainable systems. This article delineates these core architectural decisions and practical implementation strategies to guide engineering teams in building autonomous AI agents that transcend simple command-driven patterns to thrive amidst operational complexity.

Defining Autonomous AI Agents and Core Concepts

Definition and Characteristics of Autonomous AI Agents

Autonomous AI agents extend far beyond reactive programs or simple scripted bots. They are software entities capable of continuous environment interaction, independent decision-making, and execution of complex, multi-step workflows without persistent human intervention. These agents maintain sustained autonomy over extended time horizons, adapting dynamically in response to evolving conditions and inputs.

Architecturally, robust autonomous agents embody distinct yet closely cooperating modules responsible for perception, internal state management, planning, and action execution. The perception module acts as a sensor layer, ingesting raw signals from APIs, sensors, databases, or message streams, then transforming them into coherent environmental representations. This continuous sensing capability forms a foundational prerequisite for meaningful autonomy. Importantly, this modularization embraces established software engineering principles such as separation of concerns, enhancing testability, maintainability, and scalability, as discussed in Martin Fowler’s architecture patterns.

Fundamental to autonomy is continuous, iterative decision-making rather than one-off, fixed rule executions. Autonomous agents consistently re-assess their internal state and environmental inputs to select the next optimal action. For example, a backend monitoring agent might dynamically triage alerts by factoring in current system load, recent incident history, and mitigation effectiveness, adjusting priorities in near real-time. This flexibility differentiates genuine autonomy from hardcoded scripts.

Environment interaction is bidirectional: the agent actively modifies external system state via API calls, database transactions, or actuator commands. These side effects are contextually governed by the agent’s goals, ensuring coherence between internal plans and external outcomes. For instance, a data pipeline orchestrator agent might trigger ETL jobs, monitor their execution, and adjust schedules accordingly, synchronizing internal task state with distributed workflow progress.

A hallmark attribute is adaptivity: agents iteratively refine behavior based on aggregated outcomes and unexpected environmental changes. If a service endpoint consistently underperforms, an agent can learn to reroute requests or adjust timing heuristics without manual reprogramming. This adaptation often leverages persistent state management, feedback loops, and reinforcement learning or heuristic updates to improve robustness and efficiency across task executions.

Contrasting autonomous agents with naive AI scripts reveals critical architectural nuances. Simple bots often exhibit brittleness—rigid pipelines and stateless designs hinder error recovery and adaptability. Autonomous agents instead incorporate memory to track task progress, monitor failure modes, and trigger recovery actions, supporting extended, multi-step task horizons and evolving goal conditions.

- Perception Module: Ingests and processes environmental data streams continuously.

- Planning & Reasoning Module: Maintains internal state and generates adaptive execution plans.

- Action Module: Executes discrete commands that affect environment or agent state.

This separation promotes flexible evolution and maintenance, mitigating anti-patterns like monolithic codebases or opaque decision logic.
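The three-module split above can be sketched as a small sense-plan-act loop. This is a minimal illustration under stated assumptions, not a reference design: the `Agent` class, the `triage_plan` policy, the `cpu_load` field, and the `scale_up` action are all hypothetical names introduced for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Hypothetical agent wiring three separately replaceable modules."""
    perceive: Callable[[], dict]                # perception: produce an observation
    plan: Callable[[dict, dict], list]          # planning: (state, observation) -> actions
    act: Callable[[str], str]                   # action: run one command, return its outcome
    state: dict = field(default_factory=dict)   # internal memory carried across cycles

    def step(self) -> list:
        observation = self.perceive()
        actions = self.plan(self.state, observation)
        outcomes = [self.act(action) for action in actions]
        # Record what was seen and done so the next cycle can adapt.
        self.state["last_observation"] = observation
        self.state.setdefault("history", []).extend(zip(actions, outcomes))
        return outcomes


def triage_plan(state: dict, obs: dict) -> list:
    # Toy policy: scale up under high load unless that was the most recent action.
    recent = [action for action, _ in state.get("history", [])[-1:]]
    if obs["cpu_load"] > 0.8 and "scale_up" not in recent:
        return ["scale_up"]
    return []


agent = Agent(
    perceive=lambda: {"cpu_load": 0.93},
    plan=triage_plan,
    act=lambda action: f"{action}:ok",
)
first = agent.step()    # high load, no history yet: the agent scales up
second = agent.step()   # same load, but memory shows the action was just taken
```

Because each module is passed in as a plain callable, a test can swap the perception source or the planner without touching the loop itself, which is the maintainability benefit the separation is meant to provide.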

Realistic engineering contexts illustrate these principles. Consider autonomous backend agents managing cloud infrastructure health: they ingest telemetry, maintain runtime state, and dynamically adjust resource allocation workflows, integrating alerting and remediation with minimal human intervention. Such agents must cope with incomplete sensor data, noisy inputs, and API latency, underscoring the need for persistent memory and adaptive reasoning to achieve resilience.

Ultimately, engineering autonomous AI agents demands well-considered system decomposition that ensures continuous perception, decision cycles, and adaptive behavior over extended temporal spans. This foundation enables deeper examination of core autonomous decision-making and reasoning mechanisms.

Mechanisms of Autonomous Decision-Making and Reasoning

Autonomous decision-making unfolds as an iterative cognitive workflow within agents, converting environmental inputs into goal-directed actions through integrated perception, memory updates, reasoning, and execution loops. These tightly coupled stages form the cognitive core enabling agents to plan and adapt dynamically.

At the heart lies the reasoning engine, which governs whether agents can effectively plan, re-plan, and adapt actions in response to changing conditions. Historically, symbolic inference systems dominated this space: rule-based logic engines or planners that use explicit knowledge representations to traverse decision spaces predictably and auditably. Such symbolic reasoning excels in safety-critical contexts demanding transparency, determinism, and comprehensive traceability, e.g., aerospace control or medical devices, where every inference must be validated. Detailed explorations appear in the literature on symbolic AI for safety-critical systems. Symbolic systems encode domain knowledge as logical predicates, constraints, and heuristics, making explicit the cause-effect relations driving decisions.

However, symbolic reasoning is brittle in complex, ambiguous, or evolving environments: it requires extensive manual rule crafting and often fails to generalize under uncertainty or incomplete information. This contrasts sharply with large language models (LLMs), which provide flexible, context-aware reasoning capabilities. LLMs serve as generalized knowledge engines that generate adaptive, multi-step action plans from high-level natural language prompts without explicit rule enumeration. Within agents, LLMs parse user goals, infer requisite external tool calls (APIs, scripts), and compose action sequences dynamically, offering creative problem-solving at the cost of probabilistic outputs and reduced interpretability.

Architecturally, LLM-powered agents tightly couple large language models with persistent memories and tool invocation layers. Memory modules store intermediate reasoning states, prior execution results, and environment snapshots, enabling the agent to recall context, avoid redundant operations, and iteratively refine goals. Tool invocation acts as a dynamic bridge by which agents call external services based on reasoning outcomes, embedding procedural capabilities directly within cognitive flows. Comprehensive discussions of LLM and API integration include Microsoft Copilot documentation.

Balancing symbolic and LLM-driven methods requires navigating known trade-offs:

- Interpretability vs. Flexibility: Symbolic planners expose human-readable logic suitable for audit and compliance, yet impose brittle, often expensive rule engineering. LLMs adapt flexibly but produce probabilistic, non-transparent outputs that complicate debugging.

- Deterministic vs. Probabilistic Behavior: Symbolic systems deliver repeatable, verifiable results; LLMs yield variable outputs influenced by training and prompt engineering, complicating consistency guarantees.

- Knowledge Engineering vs. Pretrained Generalization: Symbolic agents rely on handcrafted ontologies and rules, whereas LLMs leverage vast pretrained corpora enabling broad domain generalization but sometimes hallucinate or err.

Pragmatic autonomous AI designs increasingly adopt hybrid architectures combining symbolic logic for safety-critical constraints and verification with LLM-driven flexibility. For example, financial advisory agents might use symbolic logic to enforce compliance guardrails while utilizing LLM planning to generate personalized investment strategies. This hybridization bolsters robustness while preserving adaptability, as discussed in How Autonomous AI Agents Become Secure by Design — NVIDIA Blog. Interfacing symbolic and neural components requires careful encoding of symbolic predicates into LLM prompt contexts and interpreting probabilistic outputs back into logical assertions without semantic loss. Further exploration is available in Automated Planning and Scheduling.

Within an agent’s execution loop, the reasoning engine consults memory, integrates environmental feedback, and issues commands or API calls accordingly. For instance, an LLM-based customer support agent might parse a complex inquiry, plan multi-step resolutions involving knowledge base lookups and ticket updates, then adapt dynamically based on real-time user reactions.

Case studies highlight the power of these mechanisms. A logistics company deploying an LLM-powered dispatch agent observed a 15% reduction in delivery times and 10% fuel savings through iterative route optimization and dynamic traffic re-routing informed by live API data and persistent memory. Meanwhile, autonomous vehicles couple hierarchical symbolic planners for safety-critical control with LLM-driven perception to balance predictability and operational flexibility, illustrating hybrid paradigm synergy.

Robust autonomous AI agents thus rest on intricately architected reasoning systems that integrate symbolic clarity and LLM adaptability. Architecting these cognitive workflows underpins genuine autonomy, enabling machines to handle extended, complex tasks with minimal human supervision. With reasoning mechanisms explored, we next examine the critical dimension of tool and external API integration that expands agents’ capabilities.

Integrating Tools and External APIs for Expanded Functionality

Autonomous AI agents realize their full potential not solely from internal reasoning but through dynamic access to external APIs and automation tools. The engineering imperative is to build secure, modular integration layers that enable agents to retrieve data, execute domain-specific actions, and orchestrate complex external services reliably—without compromising system integrity or scalability.

Central to integration are robust authentication and credential management strategies. Tokens, often OAuth2 or JWT-based, must be protected through secure storage, transparent rotation, and automatic refresh protocols to minimize agent downtime. Implementing role-based access control (RBAC) scopes API privileges to the least necessary permissions, which is critical for limiting risks from token compromise or privilege escalation. Runtime sandboxing of API calls within isolated environments further contains potential damage caused by misbehaving dependencies. Zero-trust architectures, exemplified by Google’s BeyondCorp, validate each API invocation dynamically to enforce security boundaries, an approach increasingly adopted in AI agent production systems. For OAuth2 best practices, see the OAuth 2.0 Authorization Framework (RFC 6749).

Real-world APIs exhibit behaviors requiring sophisticated resilience patterns: rate limits, transient failures, network unreliability, and inconsistent schema changes frequently disrupt workflows. Engineering solutions include circuit breakers to detect repeated failures and temporarily halt or reroute requests, preventing cascading outages. Exponential backoff with jitter mitigates retry storms during peak load. Fallback mechanisms—such as cached results or alternate service endpoints—improve robustness. Handling asynchronous or streaming API responses demands event-driven or callback paradigms; for example, reacting to webhook notifications avoids inefficient polling loops, reducing latency and resource use.
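The circuit breaker mentioned above can be sketched in a few lines. This is a simplified, single-threaded illustration: the class name, the `failure_threshold` and `reset_after` parameters, and the injectable `clock` are assumptions made for the example, and a production breaker would also need locking and per-endpoint state.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold  # consecutive failures before opening
        self.reset_after = reset_after              # cool-down in seconds
        self.clock = clock                          # injectable for testing
        self.failures = 0
        self.opened_at = None                       # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; skipping call")
            # Cool-down elapsed: let one trial call through (half-open state).
            self.opened_at = None
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # any success closes the circuit fully
        return result
```

An agent would typically keep one breaker per external dependency and treat `CircuitOpenError` as a signal to use a fallback path rather than to retry immediately.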

Architectural choices for tool invocation impact concurrency, fault tolerance, and responsiveness. Synchronous API calls simplify programming models but risk blocking agents on slow or failing services. Event-driven workflows enable concurrency and efficient resource utilization by decoupling request issuance from result handling, supporting parallelism and better fault isolation. Hybrid approaches often use message brokers (e.g., Kafka) or distributed task queues (e.g., Celery) to manage tool requests asynchronously, enhancing scalability and resilience.
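To make the contrast with blocking calls concrete, the sketch below issues several tool calls concurrently with Python's `asyncio` and bounds each one with a timeout, so a slow service cannot stall the others. The tool names (`crm`, `billing`) and the simulated coroutines are hypothetical stand-ins for real API clients.

```python
import asyncio


async def call_with_timeout(name, tool, timeout_s):
    """Run one tool coroutine; report a timeout as None instead of raising."""
    try:
        return name, await asyncio.wait_for(tool(), timeout_s)
    except asyncio.TimeoutError:
        return name, None


async def run_tools(tools, timeout_s=1.0):
    """Issue all tool calls concurrently and collect results by tool name."""
    pairs = await asyncio.gather(
        *(call_with_timeout(name, tool, timeout_s) for name, tool in tools.items())
    )
    return dict(pairs)


async def fast_lookup():
    await asyncio.sleep(0.01)   # simulated quick API response
    return {"status": "ok"}


async def slow_lookup():
    await asyncio.sleep(0.5)    # simulated slow or hanging service
    return {"status": "late"}


results = asyncio.run(
    run_tools({"crm": fast_lookup, "billing": slow_lookup}, timeout_s=0.1)
)
```

Here the slow call is cut off at the timeout while the fast result is still returned, which is the fault-isolation property the event-driven style is meant to deliver.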

A fundamental design principle is decoupling tool interfaces from agent core logic. Adapter patterns or standardized API wrappers abstract away external service peculiarities, isolating the agent from the schema, authentication, or version changes that occur frequently. Declarative interface descriptors, such as OpenAPI or JSON Schema, enable automated validation and client generation, reducing maintenance burden. Frameworks like LangChain embody this modular, extensible approach by providing flexible connectors across providers, facilitating rapid tool swaps without core rewrites.
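A minimal sketch of the adapter idea follows. It does not reproduce any particular framework's API: the `Tool` protocol, `TicketingAdapter`, `ToolRegistry`, and the vendor payload fields are hypothetical names chosen for illustration.

```python
from typing import Protocol


class Tool(Protocol):
    """Uniform interface the agent core depends on."""
    name: str

    def invoke(self, arguments: dict) -> dict: ...


class TicketingAdapter:
    """Hypothetical adapter hiding a vendor-specific payload shape."""
    name = "create_ticket"

    def __init__(self, client):
        self._client = client  # any callable that talks to the vendor API

    def invoke(self, arguments: dict) -> dict:
        # Translate the agent's neutral arguments into the vendor's field names.
        vendor_payload = {
            "subject": arguments["title"],
            "body": arguments["description"],
        }
        raw = self._client(vendor_payload)
        # Normalize the vendor response before it reaches agent logic.
        return {"ticket_id": str(raw["id"])}


class ToolRegistry:
    def __init__(self):
        self._tools = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def invoke(self, name: str, arguments: dict) -> dict:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name].invoke(arguments)


def fake_vendor_client(payload: dict) -> dict:
    # Stand-in for a real HTTP client, used only to exercise the adapter.
    return {"id": 101, "echo": payload}


registry = ToolRegistry()
registry.register(TicketingAdapter(fake_vendor_client))
result = registry.invoke(
    "create_ticket", {"title": "Login fails", "description": "HTTP 500 on submit"}
)
```

If the vendor renames a field or changes its response shape, only the adapter changes; the agent keeps calling `create_ticket` with the same arguments.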

Illustrative cases demonstrate these principles. Autonomous customer service agents integrating with multiple external systems (ticketing, CRM, payroll) use modular wrappers plus retries with exponential backoff to maintain availability. One such design led to a 30% reduction in manual handoffs and a 15% boost in customer satisfaction, with an architecture that degraded gracefully to read-only operation or delayed retries during service outages while maintaining service-level agreements.

In essence, the seamless fusion of internal reasoning and secure, resilient tool integrations empowers autonomous AI agents to scale practical utility while sustaining stateful operations and long-horizon task management—a critical foundation for robust autonomy. With tools integrated, addressing memory retention and consistent state management becomes paramount to agent effectiveness and reliability.

Memory Retention and State Management Across Tasks

Memory systems underpin autonomous AI agents’ ability to transcend isolated command executions, enabling coherent, contextually aware, multi-step workflows across sessions and distributed deployments. Engineering these memory architectures rigorously ensures agents maintain situational awareness and fuse episodic knowledge seamlessly.

Memory manifests along a spectrum from short-term caches—ephemeral state relevant to current reasoning cycles—to long-term persistent stores that archive historical contexts and episodic records. Short-term caches often employ in-memory structures (hash maps, Redis) for low-latency access, suitable for tracking dialogue states, recent API results, or dependencies between subtasks. Long-term memories rely on durable stores—NoSQL databases (e.g., MongoDB, Cassandra), relational databases, or vector embedding stores optimized for semantic retrieval. Embedding databases (Pinecone, Weaviate) are invaluable for semantic nearest-neighbor searches to recall contextually relevant prior interactions, essential for agent recall and task generalization.

Maintaining state consistency across distributed components and sessions is a complex engineering challenge. Horizontally scaled or serverless deployments require synchronized shared state to prevent stale or conflicting writes. Strategies include centralizing state in strongly consistent stores or embracing eventual consistency with conflict resolution policies (e.g., CRDTs). Partial failures, such as network partitions or crashes, demand transactional writes, idempotent updates, and checkpointing to prevent data loss or corruption. For example, a multistep financial analytics agent that combines Redis streams with periodic durable snapshots can recover from mid-task failures without losing context; see Redis Streams documentation for architectural details.

Efficient memory access involves indexing for fast retrieval, error detection via checksums or schema validation to prevent corruption, and incremental saves to reduce IO overhead and inconsistent read windows. Engineers balance persistence granularity critically; overly frequent writes incur latency and concurrency costs, while stale state risks incoherent reasoning. Designing memory APIs supporting isolation, atomic updates, and partial rollbacks improves robustness.
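As one way to combine checksum-based error detection with atomic updates, the sketch below writes an agent checkpoint to a temporary file, renames it into place, and verifies a SHA-256 digest on load. The file layout and function names are assumptions for illustration, and the state is assumed to be JSON-serializable.

```python
import hashlib
import json
import os
import tempfile


def _digest(state) -> str:
    # Canonical JSON (sorted keys) so the same state always hashes identically.
    return hashlib.sha256(json.dumps(state, sort_keys=True).encode()).hexdigest()


def save_checkpoint(path: str, state: dict) -> None:
    """Write state plus checksum to a temp file, then atomically swap it in."""
    record = {"sha256": _digest(state), "state": state}
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as handle:
        json.dump(record, handle)
        handle.flush()
        os.fsync(handle.fileno())
    # os.replace is atomic on the same filesystem, so readers never see a partial file.
    os.replace(tmp_path, path)


def load_checkpoint(path: str) -> dict:
    """Load a checkpoint and refuse it if the stored checksum does not match."""
    with open(path) as handle:
        record = json.load(handle)
    if _digest(record["state"]) != record["sha256"]:
        raise ValueError("checkpoint failed integrity check")
    return record["state"]
```

How often to call `save_checkpoint` is exactly the persistence-granularity trade-off described above: each call costs a synchronous disk write, so it is usually reserved for task boundaries rather than every reasoning step.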

Incorporating human-in-the-loop AI within memory management elevates trustworthiness and error recovery. Agents surface checkpoints or critical decision points for manual review or annotation, enabling corrections without stalling autonomous progress. For instance, AI coding assistants log intermediate contexts while allowing developers to insert new instructions or adjust goals dynamically, supporting cooperative workflows in high-stakes or safety-critical domains.

Robust memory architecture profoundly impacts agent performance by enabling coherent task progression that respects historic contexts, facilitates learning patterns, and prevents redundant information requests or contradictory behavior. Absent solid memory, agents degrade into repetitive, fragmented interactions with poor user experience and operational inefficiency.

Common pitfalls include brittle memory schemas tightly coupled to specific workflows that resist extensibility, information overload causing retrieval latency spikes and confusing reasoning, and context drift, where outdated or conflicting updates cause memory divergence. Mitigation includes pruning policies, relevance decay scoring, and anomaly detection to surface divergent states proactively.
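Relevance decay scoring can be as simple as an exponential half-life applied to an importance weight. The sketch below assumes each memory is a dict with hypothetical `importance` and `last_access` fields (timestamps in seconds); the one-hour half-life is an arbitrary illustrative default.

```python
def relevance(memory: dict, now: float, half_life_s: float = 3600.0) -> float:
    """Importance halves for every half_life_s seconds since last access."""
    age_s = max(0.0, now - memory["last_access"])
    return memory["importance"] * 0.5 ** (age_s / half_life_s)


def prune(memories: list, now: float, keep: int, half_life_s: float = 3600.0) -> list:
    """Keep only the `keep` most relevant memories, most relevant first."""
    ranked = sorted(
        memories,
        key=lambda m: relevance(m, now, half_life_s),
        reverse=True,
    )
    return ranked[:keep]


memories = [
    {"id": "old-important", "importance": 1.0, "last_access": 0.0},
    {"id": "fresh-minor", "importance": 0.4, "last_access": 7000.0},
    {"id": "stale-minor", "importance": 0.3, "last_access": 0.0},
]
kept = prune(memories, now=7200.0, keep=2)
```

In this example a recently accessed minor memory outranks an important one that has gone untouched for two half-lives, and the stale minor entry is dropped.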

Concrete examples include digital health assistants employing multi-tiered memory models—Redis caches for immediate symptom tracking, relational stores for patient histories, and embedding databases for medical literature context—achieving 25% improved diagnostic accuracy and 40% faster response over stateless designs.

Ultimately, well-engineered memory retention converts autonomous agents from ad-hoc responders into truly persistent, evolving problem solvers capable of anticipating and reasoning through complex plans, preparing groundwork for the next phase: planning frameworks that realize these plans in execution.

Planning Frameworks for Autonomous Execution

Planning frameworks transform high-level goals into structured, executable subtasks, enabling autonomous agents to sustain operation over extended horizons. Designing planning systems requires navigating complexity, uncertainty, and time-sensitive constraints prevalent in real-world deployments.

Common architectures leverage hierarchical task network (HTN) planners, rule-based engines, or heuristic search algorithms to recursively decompose objectives. HTNs arrange tasks into trees where leaves correspond to primitive, executable actions or tool invocations, enabling modularity and recursive refinement. Rule engines impose domain-specific logic and constraints, balancing predictability with flexibility. Heuristic search approaches (A*, greedy best-first) dynamically navigate task spaces shaped by custom cost or reward functions, allowing real-time replanning based on environmental feedback. For foundational context, see Overview of HTN Planning by Stanford University.
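The recursive decomposition at the core of HTN planning can be illustrated with a deliberately reduced sketch. It assumes exactly one method per compound task and ignores preconditions and method selection, which real HTN planners handle; the task names are hypothetical.

```python
# Hypothetical method table: compound task -> ordered subtasks.
# Any task that does not appear as a key is treated as primitive.
METHODS = {
    "deliver_report": ["gather_data", "publish"],
    "gather_data": ["query_db", "fetch_metrics"],
    "publish": ["render_pdf", "send_email"],
}


def decompose(task: str, methods: dict) -> list:
    """Expand a task depth-first into an ordered list of primitive actions."""
    if task not in methods:
        return [task]  # leaf: directly executable action or tool invocation
    plan = []
    for subtask in methods[task]:
        plan.extend(decompose(subtask, methods))
    return plan


plan = decompose("deliver_report", METHODS)
```

The resulting flat plan preserves the ordering implied by the task tree, and each leaf is something the action layer can execute directly.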

Advanced agent stacks incorporate formulations such as Markov decision processes (MDPs), Monte Carlo tree search (MCTS), and reinforcement learning (RL)-guided planners. MDPs provide a principled framework for sequential decision-making under uncertainty, optimizing expected cumulative reward, but their state spaces grow combinatorially and demand state abstraction techniques. MCTS balances exploration and exploitation by simulating future action sequences and guiding tree traversal toward promising branches, an approach central to game-playing AI successes. RL-based planners derive policies directly from interaction data, facilitating adaptive, experience-driven behavior, although they require extensive training data and can converge slowly.

Crucially, planners must balance exploration against exploitation, trading off known effective strategies against novel actions that might improve outcomes. Techniques such as epsilon-greedy selection, Upper Confidence Bound (UCB), or Thompson sampling adjust exploration in response to uncertainty and accumulated experience. Too little exploration risks performance stagnation, while too much wastes resources on unpromising actions. Engineers often augment core planners with meta-planners or adaptive modules that tune these strategies at runtime.
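As a concrete instance of one of these techniques, the sketch below implements UCB1 arm selection, which scores each option by its mean reward plus an uncertainty bonus that shrinks as the option is tried more often. The function name and the list-based bookkeeping are assumptions for the example; rewards are assumed to lie in [0, 1].

```python
import math


def ucb1_select(counts: list, rewards: list, c: float = math.sqrt(2)) -> int:
    """Return the index of the arm to try next under UCB1.

    counts[i]  -- number of times arm i has been tried
    rewards[i] -- total reward collected from arm i
    """
    # Try every arm once before comparing scores.
    for arm, tries in enumerate(counts):
        if tries == 0:
            return arm
    total = sum(counts)
    scores = [
        rewards[arm] / counts[arm] + c * math.sqrt(math.log(total) / counts[arm])
        for arm in range(len(counts))
    ]
    return max(range(len(counts)), key=scores.__getitem__)
```

With equal trial counts the arm with the higher mean wins (exploitation), while a rarely tried arm receives a large bonus and gets selected even if its current mean is lower (exploration).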

Robust planning accommodates dynamic workflow modeling that supports replanning triggered by new observations, delayed effects, or error conditions. Checkpointing agent states before risky steps enables rollback and backtracking if downstream assumptions prove invalid. Planning under partial observability necessitates probabilistic belief state estimation or contingency planning, enriching workflows with fallback options. Integrating these capabilities demands low-latency coordination between planners and tool invocation layers, preserving agent responsiveness in complex operational contexts.

Technical hurdles include managing state-space explosion, particularly when goals decompose combinatorially or uncertainties multiply. Engineers employ abstraction hierarchies, sampling-based search, or bounded-horizon planning to manage complexity, often prioritizing tractable approximations over exact optimality. Planning with incomplete or noisy information requires explicit information-gathering actions embedded within workflows, adding another layer of intricacy. Tight real-time constraints further drive the adoption of anytime algorithms that refine partial plans continuously.

Planning frameworks constitute a living aspect of autonomous AI agent development, tightly coupled with continuous integration cycles. Monitoring planning efficacy, capturing execution outcomes, and logging failure modes generate data that feed iterative improvements. Online learning or heuristic updates adjust behavior in production, while simulation environments validate new strategies pre-deployment. This DevOps-informed feedback loop ensures planning systems evolve alongside changing operational demands.

An applied example is a large-scale logistics coordination agent deploying MCTS combined with hierarchical task decomposition to autonomously schedule pickups, optimize routes, and manage inventory. Dynamic replanning responded to traffic jams and inventory shortages, increasing on-time deliveries by 20% and halving manual dispatcher workloads. Integration with external APIs providing continuous GPS, weather, and inventory updates exemplified harmonious planning and tool invocation.

In summary, sophisticated planning frameworks elevate autonomous AI agents from reactive entities to proactive strategists, capable of decomposing, scheduling, and adapting complex workflows. Combined with persistent memory and secure tool integration, planning forms a central pillar enabling truly independent and scalable task fulfillment.

Trade-offs Between Symbolic and LLM-Driven Reasoning

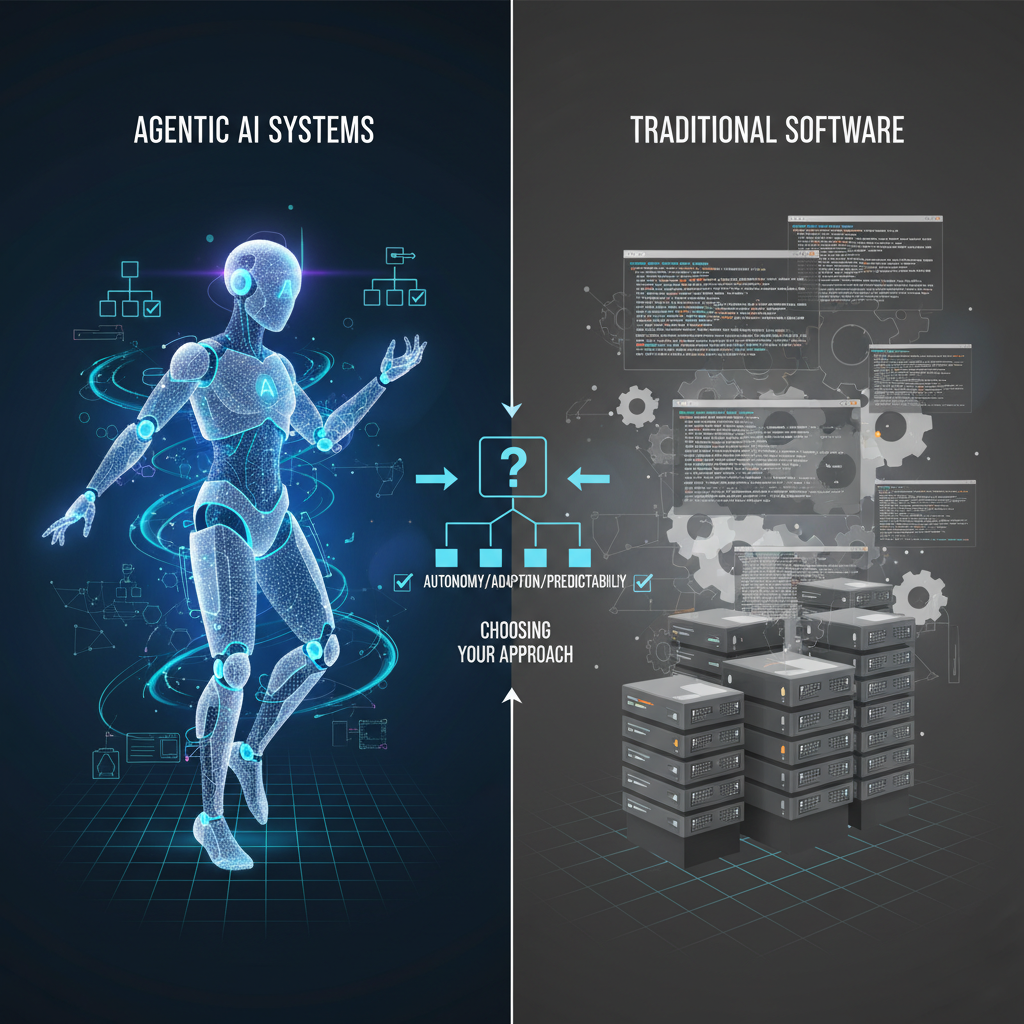

A foundational architectural decision in autonomous AI design concerns choosing between symbolic and LLM-driven reasoning paradigms, each embodying distinct trade-offs impacting system reliability, interpretability, and adaptability.

Symbolic reasoning systems utilize explicit logic rules, knowledge graphs, and classical planning algorithms to derive decisions. This approach delivers strong determinism and explainability: every inference step follows clear, rule-defined pathways, facilitating debugging and formal verification. For example, agents applying classical planners generate verifiable action sequences with provable correctness under specified conditions, which is vital for regulatory compliance or safety-critical applications. However, upfront knowledge engineering demands heavy domain expertise and extensive modeling efforts. Maintaining symbolic rule bases in the face of shifting requirements or open environments proves costly and brittle, with poor generalization beyond their initial scope.

Conversely, LLM-driven agents leverage transformer-based architectures trained on vast textual corpora to perform flexible, data-driven reasoning. They excel at interpreting diverse inputs, inferring implicit context, and dynamically integrating heterogeneous toolsets. However, LLM outputs are inherently probabilistic and non-deterministic, complicating reproducibility and traceability given reliance on implicit learned representations rather than explicit rules. Debugging these agents demands novel tooling—prompt engineering diagnostics, output correlation logging—but transparency remains limited. Moreover, LLM-powered agents occasionally hallucinate tool calls or generate malformed data lacking clear error signals, impacting robustness.

This fundamental tension motivates hybrid reasoning architectures combining symbolic planners as safety and verifiability anchors with LLM components providing fluid natural language understanding and dynamic tool selection. Symbolic reasoning ensures robust task decomposition, control flow enforcement, and constraint satisfaction, while LLMs handle nuanced interpretation, knowledge augmentation, and flexible API invocation. For instance, an agent may generate high-level, verifiable plans symbolically, then employ LLMs to formulate API queries or summarize intermediate states. The symbolic layer thus constrains LLM uncertainty, reducing error propagation. Architecting effective intermodule communication requires defining interfaces that map symbolic predicates into LLM contexts and interpreting probabilistic outputs back into symbolic form, preserving semantic fidelity. For further technical foundations, consult Automated Planning and Scheduling.

Practical implementations illustrate symbolic fallbacks mitigating LLM risk. Enterprise automation platforms verify LLM-suggested tool invocations symbolically against schemas before execution, preventing invalid calls prone to causing cascading failures. This trades some flexibility for enhanced robustness—crucial where erroneous autonomous actions could generate costly disruptions. Balancing symbolic rigor with LLM agility remains a nuanced engineering exercise demanding domain expertise and continuous refinement.
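A schema check of this kind can be sketched with the standard library alone. The tool catalog, tool names, and argument fields below are hypothetical, and a production system would more likely validate against JSON Schema or OpenAPI descriptors than a hand-written table.

```python
import json

# Hypothetical tool catalog: tool name -> required argument names and types.
TOOL_SCHEMAS = {
    "refund_order": {"order_id": str, "amount_cents": int},
    "lookup_order": {"order_id": str},
}


def validate_tool_call(raw: str):
    """Parse and check a model-proposed call; raise ValueError on any mismatch."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")
    name = call.get("tool")
    if not isinstance(name, str) or name not in TOOL_SCHEMAS:
        raise ValueError(f"unknown tool: {name!r}")
    args = call.get("arguments")
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")
    schema = TOOL_SCHEMAS[name]
    if set(args) != set(schema):
        raise ValueError(f"expected arguments {sorted(schema)}, got {sorted(args)}")
    for key, expected in schema.items():
        # Exact type match, so JSON true/false is rejected where an int is required.
        if type(args[key]) is not expected:
            raise ValueError(f"{key} must be {expected.__name__}")
    return name, args
```

Only calls that pass this gate are handed to the execution layer; everything else becomes an explicit error that the agent can retry, repair, or escalate.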

In sum, selecting among symbolic, LLM, or hybrid reasoning frameworks involves measurable trade-offs between execution transparency, fault tolerance, and adaptive breadth—decisions foundational to building dependable autonomous AI agents capable of complex, tool-augmented workflows.

Managing Real-World Failures: API Limits and Tool Unpredictability

Deploying autonomous AI agents in production mandates robust engineering to handle an array of failure modes endemic to external API and third-party tool integration. Without resilient failure management, agents risk cascading errors, inconsistent state, and degraded user experiences that erode trust in automation tools.

Common failure modes include API rate limiting, where providers cap request frequencies, abruptly interrupting workflows dependent on timely data or operations. Network timeouts and transient connection losses inject uncertainty about whether prior calls succeeded, complicating internal state synchronization. Unplanned service outages and schema changes cause malformed responses that trigger errors or corrupt agent memory. Proactive anticipation, detection, and graceful handling of these faults are prerequisites for production viability.

Effective failure management centers on retry and backoff strategies engineered to recover from transient issues without exacerbating failures. Exponential backoff with random jitter spaces retries to prevent synchronized retry storms, which worsen service strain. Combined with circuit breaker patterns, repeated failures trigger temporary halts to external calls, shielding downstream systems. A crucial design facet is preserving internal state consistency across retries—idempotent APIs or compensating transactions ensure side effects occur only once despite repeated invocation attempts. Guidance appears in Microsoft Azure Retry Patterns.
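Exponential backoff with jitter can be sketched as follows. This version uses the "full jitter" variant, where each delay is drawn uniformly between zero and an exponentially growing cap; the parameter names and defaults are illustrative assumptions, and `sleep` and `rng` are injectable only to make the behavior testable.

```python
import random
import time


def retry_with_backoff(
    fn,
    max_attempts=5,
    base_delay=0.5,
    max_delay=30.0,
    retry_on=(ConnectionError, TimeoutError),
    sleep=time.sleep,
    rng=random.random,
):
    """Call fn until it succeeds, sleeping a jittered, growing delay between tries."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            cap = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: a random delay in [0, cap) de-synchronizes competing clients.
            sleep(rng() * cap)
```

Retrying is only safe when the wrapped operation is idempotent. For calls with side effects, one common approach is to send the same client-generated request identifier on every attempt so the server can deduplicate, provided the API supports it.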

Fallback strategies enhance resilience by substituting secondary endpoints or employing degraded operational modes when primary tools fail. For example, a customer support agent might default to cached knowledge summaries or flag issues for human review when authoritative data sources become unreachable, preserving core functionality under adversity.

Unmitigated tool failures cascade upward, risking plan integrity and memory consistency. Malformed API responses may feed corrupted data into agent memories, resulting in incoherent reasoning and flawed command sequences. Explicit error signaling at tool integration boundaries is critical—capturing error metadata (type, timestamps, payload) and propagating errors through reasoning flows enables targeted recovery or human notification, avoiding silent failures.
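One way to make error signaling explicit at the tool boundary is to return a structured result instead of letting exceptions or malformed data leak into memory. The `ToolResult` and `ToolError` types below are hypothetical; the fields mirror the metadata listed above (type, timestamp, payload).

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Optional


@dataclass(frozen=True)
class ToolError:
    tool: str
    error_type: str    # exception class name, e.g. "TimeoutError"
    message: str
    occurred_at: str   # ISO 8601 timestamp in UTC
    payload: Any       # the request that triggered the failure


@dataclass(frozen=True)
class ToolResult:
    value: Any = None
    error: Optional[ToolError] = None

    @property
    def ok(self) -> bool:
        return self.error is None


def call_tool(name: str, fn, payload) -> ToolResult:
    """Invoke a tool and convert any exception into structured error metadata."""
    try:
        return ToolResult(value=fn(payload))
    except Exception as exc:
        return ToolResult(
            error=ToolError(
                tool=name,
                error_type=type(exc).__name__,
                message=str(exc),
                occurred_at=datetime.now(timezone.utc).isoformat(),
                payload=payload,
            )
        )
```

The reasoning loop can then branch on `result.ok` and write only successful values to memory, while failed results are routed to recovery logic or human notification.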

Comprehensive observability forms the backbone of failure diagnosis and robustness improvements. Instrumenting APIs with logging, monitoring, and tracing captures latency, error rates, data volumes, and performance anomalies. End-to-end tracing—from user inputs through tool invocations to output generation—facilitates root-cause analysis and continuous system refinement.

Security complements fault tolerance by enforcing rigorous input validation and output sanitization, preventing injection attacks, corrupted states, or privilege escalations through tool layers. These safeguards are especially salient in regulated or sensitive domains.

In sum, operational reliability of autonomous AI hinges on systemic fault tolerance around external APIs and tools—reliable retries, fallback paths, error containment, and observability collectively sustain long-running workflows and consistent state, cementing user trust in autonomous automation.

Challenges with Memory Consistency and State Synchronization

Maintaining consistent internal memory and synchronized shared state is foundational for autonomous AI agents executing extended, multi-step reasoning workflows across distributed or concurrent environments. Yet, memory management often represents a critical yet underappreciated source of fragility and bottlenecks undermining reliability and decision correctness.

A key failure mode arises from memory inconsistencies introducing stale, conflicting, or incomplete data into the reasoning pipeline. Asynchronous processing can cause out-of-order or partial memory updates without rollback, corrupting states and propagating faulty assumptions. For instance, an LLM-powered agent referencing outdated user preferences or mismatched prior tool outputs may produce incoherent or invalid command sequences.

Preventing such inconsistencies entails implementing memory validation and reconciliation protocols. Techniques like checksums or hash-based integrity verification detect corruption early. Versioning schemes that track updates enable conflict detection through version mismatches, supporting resolution strategies such as last-write-wins or custom merge functions that balance consistency and responsiveness. Latency-sensitive or partition-prone environments may favor eventual consistency with periodic reconciliation, while correctness-critical contexts might require strong consistency enforced via locking or transactions, at the cost of added latency.
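
The combination of integrity hashes and version checks can be sketched as a small in-memory store with optimistic concurrency. This is a minimal sketch, assuming JSON-serializable values; `VersionedMemory`, `Record`, and `VersionConflict` are hypothetical names, not part of any particular framework.

```python
import hashlib
import json
from dataclasses import dataclass


def checksum(value) -> str:
    """SHA-256 over a canonical JSON encoding of the value."""
    encoded = json.dumps(value, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


@dataclass
class Record:
    value: object
    version: int
    digest: str


class VersionConflict(Exception):
    """Raised when a writer's expected version is out of date."""


class VersionedMemory:
    def __init__(self):
        self._records = {}

    def read(self, key) -> Record:
        record = self._records[key]
        # Integrity check: detect corruption before the value reaches reasoning.
        if checksum(record.value) != record.digest:
            raise ValueError(f"checksum mismatch for {key!r}")
        return record

    def write(self, key, value, expected_version: int) -> int:
        current = self._records.get(key)
        current_version = current.version if current else 0
        # Optimistic concurrency: reject writes based on a stale read.
        if expected_version != current_version:
            raise VersionConflict(
                f"{key!r}: expected v{expected_version}, found v{current_version}"
            )
        new_version = current_version + 1
        self._records[key] = Record(value, new_version, checksum(value))
        return new_version


memory = VersionedMemory()
v1 = memory.write("prefs", {"lang": "en"}, expected_version=0)
v2 = memory.write("prefs", {"lang": "fr"}, expected_version=v1)
try:
    memory.write("prefs", {"lang": "de"}, expected_version=v1)  # stale writer
    conflict_detected = False
except VersionConflict:
    conflict_detected = True
```

On a conflict the caller can re-read and retry, apply a merge function, or escalate, depending on how much staleness the workflow tolerates.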

Distributed or concurrent agent architectures necessitate synchronization mechanisms. Coarse locking can guarantee exclusive access but risks contention and throughput degradation. Alternatively, event sourcing records immutable logs of state changes, reconstructing consistent views by replay. Strategically employing Conflict-Free Replicated Data Types (CRDTs) supports deterministic merges across replicas, tolerating network partitions and concurrent writes—a powerful model for replicated agent state synchronization.
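
To make the CRDT idea concrete, the following sketch implements a grow-only counter (G-Counter), one of the simplest CRDTs. Each replica increments only its own slot, and merging takes the per-replica maximum, so merges are commutative, associative, and idempotent. The replica identifiers are hypothetical, and real agent state would usually need richer types such as registers, sets, or maps.

```python
class GCounter:
    """Grow-only counter CRDT: one monotonically increasing slot per replica."""

    def __init__(self, replica_id: str):
        self.replica_id = replica_id
        self.counts = {}

    def increment(self, amount: int = 1) -> None:
        if amount < 0:
            raise ValueError("a G-Counter can only grow")
        self.counts[self.replica_id] = self.counts.get(self.replica_id, 0) + amount

    def value(self) -> int:
        return sum(self.counts.values())

    def merge(self, other: "GCounter") -> None:
        # Element-wise maximum makes repeated or reordered merges harmless.
        for replica_id, count in other.counts.items():
            self.counts[replica_id] = max(self.counts.get(replica_id, 0), count)


# Two agent replicas count completed tasks while partitioned from each other.
a = GCounter("agent-a")
b = GCounter("agent-b")
a.increment(2)
b.increment(3)

# After the partition heals, merging in either order converges to the same state.
a.merge(b)
b.merge(a)
a.merge(b)  # merging again changes nothing
```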

Incorporating human-in-the-loop checkpoints supplements automated reconciliation by enabling manual verification of ambiguous or conflicted states before committing critical decisions. For example, financial auditing agents flag inconsistent transaction records for human review, avoiding costly mistakes. Such hybrid oversight acknowledges current AI limitations and safeguards high-stakes operations.

Memory lifecycle management addresses data growth and performance degradation from accumulating history. Adaptive garbage collection and pruning policies discard outdated or irrelevant contexts, balancing resource constraints with task continuity. Agents may selectively compress or archive portions of long-term memory, mitigating latency spikes while retaining essential knowledge.
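
A pruning policy of this kind might combine an age limit with a size cap while protecting entries marked as essential. The sketch below is one possible shape under those assumptions; `MemoryItem`, the `pinned` flag, and the thresholds are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    text: str
    created_at: float  # seconds on whatever clock the agent uses
    pinned: bool = False  # pinned items are exempt from age-based expiry


def prune(items, now, max_age_s, max_items):
    """Split items into (kept, archived) using an age limit and a size cap."""
    fresh, archived = [], []
    for item in items:
        if item.pinned or now - item.created_at <= max_age_s:
            fresh.append(item)
        else:
            archived.append(item)
    # Under the size cap, prefer pinned items, then the most recent ones.
    fresh.sort(key=lambda i: (i.pinned, i.created_at), reverse=True)
    kept, overflow = fresh[:max_items], fresh[max_items:]
    return kept, archived + overflow


items = [
    MemoryItem("A", created_at=0, pinned=True),
    MemoryItem("B", created_at=50),
    MemoryItem("C", created_at=90),
    MemoryItem("D", created_at=95),
    MemoryItem("E", created_at=10),
]
kept, archived = prune(items, now=100, max_age_s=60, max_items=2)
```

Returning the archived items, rather than dropping them, leaves room for compressing or moving them to cheaper storage.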

Memory integrity directly influences planning and execution fidelity. Obsolete or inconsistent memories cause plan failures, incorrect tool invocations, and execution errors. Integrating memory consistency metrics and anomaly detection into monitoring dashboards provides early warning signals, enabling proactive maintenance before system-wide impact.

A large-scale automation pipeline integrating multiple LLM agents for customer onboarding employed a hybrid memory model using event sourcing plus version control. This design lowered inconsistent state errors by 40%, preserving cross-agent synchronization in parallel workflows. Human interventions resolved edge-case conflicts, ensuring compliance and reducing onboarding time by 20%, demonstrating measurable benefits of rigorous memory management.

In conclusion, deliberate engineering of memory consistency and synchronization mechanisms constitutes a cornerstone of reliable autonomous AI agents. Selecting appropriate state models, embedding validation, optionally involving humans, and governing memory lifecycle policies empower scalable, fault-tolerant multi-step automation frameworks.

Together with failure management and reasoning architectures, mastering memory is critical to realizing robust autonomous AI agents capable of operating dependably at scale.

Operational Considerations and Best Practices for Building Autonomous AI Agents

Engineering autonomous AI agents that integrate sophisticated reasoning, tool use, and memory retention mandates an operational mindset centered on pragmatic reliability, security, observability, and scalability. These systems function in complex, dynamic contexts, demanding architecture and engineering patterns that address tooling intricacies, durable state management, fault tolerance, and trustworthiness.

Core to production readiness is modular design that decouples reasoning logic, state storage, and tool execution. Independent scaling of reasoning compute, persistent memory layers, and API integration not only optimizes resource use but also contains failures—API latency spikes should not stall reasoning, nor should memory inconsistencies cascade to corrupt decision processes. This modularity fosters resilience and maintainability in evolving environments.

Security, trust, and governance form foundational pillars. Autonomous agents interact extensively with potentially sensitive external systems and data, necessitating strict access controls, authentication best practices, input and output validation, auditing, and fail-safe policies. Such measures prevent exploitation or unintended behaviors, anchoring agent actions within defined operational boundaries.

Complementing autonomy, observability and human oversight facilitate transparency and control. Fine-grained telemetry, including API metrics, reasoning trace logs, memory snapshots, and heuristic signals, provides actionable visibility into agent health and behavior. Embedding human checkpoints at risk-critical junctures allows error correction, overrides, and trust-building, reconciling automation efficiency with safety requirements and enabling iterative system refinement.

The following sub-sections detail key operational domains: security and trust, scalability and fault isolation, and human oversight and monitoring—each critical for delivering resilient, production-grade autonomous AI agents.

Security and Trust in Autonomous AI Systems

Security in autonomous AI systems transcends traditional data protection; it anchors trustworthiness and operational stability for agents wielding broad privileges, including external tool invocation and persistent memory manipulation. Careful API security design is imperative.

Token-based authentication paired with role-based access control (RBAC) constitutes best practice. OAuth 2.0 bearer tokens or JWTs facilitate fine-grained, scoped API access that prevents excessive privileges. For example, an agent that only orchestrates data reads should never possess write permissions on sensitive user records. Least-privilege enforcement significantly reduces attack surfaces and privilege escalation risks.
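
The read-only example can be sketched as a scope check performed before each operation. The scope strings, `AgentToken`, and the two handlers below are hypothetical; in practice the scopes would come from a validated OAuth 2.0 token or JWT rather than being constructed in code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentToken:
    agent_id: str
    scopes: frozenset  # scopes granted to this agent, e.g. {"users:read"}


class PermissionDenied(Exception):
    pass


def require_scope(token: AgentToken, scope: str) -> None:
    """Reject the call unless the token carries the required scope."""
    if scope not in token.scopes:
        raise PermissionDenied(f"{token.agent_id} lacks scope {scope!r}")


def read_user(token: AgentToken, user_id: int) -> dict:
    require_scope(token, "users:read")
    return {"id": user_id}


def update_user(token: AgentToken, user_id: int, fields: dict) -> dict:
    require_scope(token, "users:write")
    return {"id": user_id, **fields}


reader = AgentToken("reporting-agent", frozenset({"users:read"}))
profile = read_user(reader, 42)
try:
    update_user(reader, 42, {"email": "new@example.com"})
    write_blocked = False
except PermissionDenied:
    write_blocked = True
```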

Permission scopes segment agent capabilities along fine operational boundaries—differentiating read, create, update, and delete authorities—and segregate tool access by agent identity or operational phase. Complementing these controls with cryptographic measures—TLS for channel encryption, request signing for integrity verification—ensures secure, tamper-resistant communication even in adversarial networks.
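
Request signing for integrity verification is commonly built on an HMAC over the request's essential parts. The sketch below uses Python's standard `hmac` and `hashlib` modules; the canonical message layout, the placeholder secret, and the function names are assumptions for illustration. Real APIs define their own canonicalization rules, and a complete scheme would also reject stale timestamps to limit replay.

```python
import hashlib
import hmac


def sign_request(secret: bytes, method: str, path: str,
                 body: bytes, timestamp: str) -> str:
    """Compute an HMAC-SHA256 signature over the request's essential parts."""
    message = b"\n".join(
        [method.encode("utf-8"), path.encode("utf-8"),
         timestamp.encode("utf-8"), body]
    )
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_request(secret: bytes, method: str, path: str,
                   body: bytes, timestamp: str, signature: str) -> bool:
    """Recompute the signature and compare in constant time."""
    expected = sign_request(secret, method, path, body, timestamp)
    return hmac.compare_digest(expected, signature)


secret = b"demo-shared-secret"  # placeholder; load real secrets from a vault
body = b'{"action": "read", "record": 42}'
timestamp = "2024-01-01T00:00:00Z"

signature = sign_request(secret, "POST", "/v1/records", body, timestamp)
valid = verify_request(secret, "POST", "/v1/records", body, timestamp, signature)
tampered = verify_request(
    secret, "POST", "/v1/records",
    b'{"action": "delete", "record": 42}', timestamp, signature,
)
```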

Within-system trust boundaries isolate risky operations, using sandboxing, interface contracts, and runtime guards. Segregating the reasoning engine from API invocation layers facilitates quick isolation upon anomaly detection, mitigating fault propagation.

Robust audit logging tracks API calls with parameters, decision rationales, and state transitions, supporting forensic analysis, regulatory compliance, and explainability audits. This is non-negotiable in regulated industries such as finance and healthcare.

Fail-safe mechanisms, including circuit breakers, throttling, and automatic rollback, halt or reverse suspicious agent actions based on metrics or policy violations. For example, elevated API failure rates or semantic output anomalies can trigger flow throttling, avoiding data corruption and cascading service failures.
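
A circuit breaker can be sketched in a few lines: after a run of consecutive failures it rejects calls outright, then allows a single trial call once a cool-down has elapsed. The thresholds, the injectable `clock`, and the class names are assumptions for this example; production breakers usually add per-endpoint state, metrics, and thread safety.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without invoking the tool."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout_s=30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout_s:
                raise CircuitOpenError("circuit open; call rejected")
            # Cool-down elapsed: fall through and allow one trial call.
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        self.failures = 0
        self.opened_at = None  # a success closes the circuit
        return result


now = [0.0]  # fake clock so the demo runs instantly
breaker = CircuitBreaker(failure_threshold=2, reset_timeout_s=10.0,
                         clock=lambda: now[0])


def failing_tool():
    raise ConnectionError("upstream unavailable")


outcomes = []
for _ in range(3):
    try:
        breaker.call(failing_tool)
    except CircuitOpenError:
        outcomes.append("rejected")
    except ConnectionError:
        outcomes.append("failed")

now[0] = 11.0  # advance past the cool-down
outcomes.append(breaker.call(lambda: "ok"))
```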

Common pitfalls include consuming third-party API responses without validation, which exposes agents to injection or data corruption attacks. Overprivileged tokens invite lateral movement and system compromise. Implicit trust in tool outputs breeds brittleness; rigorous schema and semantic validation guardrails are mandatory.
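
Schema and semantic validation can be layered in a single guard function that runs before a tool response is allowed into memory or planning. The sketch below validates a hypothetical price-quote response using only the standard library; the field names, the allowed currency set, and `validate_quote` are invented for illustration, and a schema library could replace the hand-written type checks.

```python
ALLOWED_CURRENCIES = {"USD", "EUR", "GBP"}  # assumed business rule
SCHEMA = {"symbol": str, "price": float, "currency": str}


def validate_quote(raw) -> dict:
    """Return a cleaned copy of a quote response or raise ValueError."""
    if not isinstance(raw, dict):
        raise ValueError("response must be a JSON object")
    clean = {}
    for name, expected in SCHEMA.items():
        if name not in raw:
            raise ValueError(f"missing field: {name}")
        value = raw[name]
        # Accept integers where a float is expected, but never booleans.
        if expected is float and isinstance(value, int) and not isinstance(value, bool):
            value = float(value)
        if not isinstance(value, expected):
            raise ValueError(f"field {name} has type {type(value).__name__}")
        clean[name] = value  # unexpected extra fields are dropped
    # Semantic checks go beyond types.
    if clean["price"] <= 0:
        raise ValueError("price must be positive")
    if clean["currency"] not in ALLOWED_CURRENCIES:
        raise ValueError("unsupported currency")
    return clean


quote = validate_quote(
    {"symbol": "ACME", "price": 10, "currency": "USD", "debug": "ignored"}
)
```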

A case study in financial AI automation showed that retrofitting token-scoped access controls reduced API misuse by over 70% and audit logging plus circuit breakers cut incident response time by 35%, yielding multimillion-dollar annual compliance savings.

Ultimately, layered defense-in-depth combining authentication, minimal privilege, validation, fault isolation, and monitoring fosters operational trust without impairing agent agility. For more, consult Microsoft secure API design.

Designing for Scalability and Fault Isolation

Scalability and fault tolerance emerge from architectural modularity and decoupling within autonomous AI agents. Monolithic designs impede scaling and inhibit failure containment, while loosely coupled services enable independent resource allocation and graceful degradation.

Separating compute-heavy reasoning engines (e.g., large LLM inference or symbolic planning) from stateful memory stores and from I/O-bound API/tool integration layers enables elastic scaling tailored to workload characteristics. Reasoning components scale horizontally across GPU clusters or CPU pools for concurrency; memory backends prioritize consistency and latency; API layers manage latency and transient failure handling.

Such modularity facilitates fault isolation. Circuit breakers in API modules prevent cascading slowdowns from external service outages. Replicated or cached memory stores reduce single points of failure, enabling fast state recovery. Graceful degradation strategies ensure reasoning engines continue operating with stale or partial data if necessary, preserving progress.
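
Graceful degradation with stale data might look like a reader that remembers the last good value per key and serves it, clearly flagged, when the backend is unavailable. This is a sketch under simplifying assumptions: `StaleTolerantReader` is a hypothetical name, the store is in-process, and there is no upper bound on staleness, which a real system would probably enforce.

```python
import time


class StaleTolerantReader:
    """Serve the last known good value when the backing store fails."""

    def __init__(self, fetch, clock=time.monotonic):
        self._fetch = fetch
        self._clock = clock
        self._last_good = {}  # key -> (value, stored_at)

    def get(self, key):
        """Return (value, age_seconds, is_stale)."""
        try:
            value = self._fetch(key)
        except Exception:
            if key not in self._last_good:
                raise  # nothing to fall back on
            value, stored_at = self._last_good[key]
            return value, self._clock() - stored_at, True
        self._last_good[key] = (value, self._clock())
        return value, 0.0, False


backend_up = [True]
now = [0.0]  # fake clock for a deterministic demo


def fetch(key):
    if not backend_up[0]:
        raise ConnectionError("memory store unreachable")
    return {"key": key, "balance": 100}


reader = StaleTolerantReader(fetch, clock=lambda: now[0])
fresh = reader.get("acct-1")

backend_up[0] = False
now[0] = 5.0
degraded = reader.get("acct-1")  # served from the last good copy
```

Returning the age and a staleness flag lets the reasoning layer decide whether a decision is safe to make on old data.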

An AI agent orchestration framework from a global enterprise exemplifies this: planning modules, API adapters, and distributed state stores operated independently. When an external document ingestion API suffered an outage, circuit breakers tripped and isolated the failure, preserving scheduler throughput. Replicated multi-node key-value stores supported recovery of lost writes, maintaining reasoning continuity.

These structural choices underpin high-availability SLAs and low latency, delivering near-continuous operation and resilient responsiveness despite inherent API unpredictability. Horizontal scaling accommodates workload growth and the proliferation of concurrent agents, both key to enterprise-grade deployment.

In summary, architectural modularity, fault isolation, and targeted scaling strategies transform autonomous AI agents from prototypes to production-grade resilient systems. See AWS autonomous agents architecture for industrial insights.

Incorporating Human Oversight and Continuous Monitoring

Despite aspirations toward full autonomy, incorporating human-in-the-loop controls and comprehensive monitoring remains essential to safe, reliable AI agent deployment. This integration balances automated efficiency with human judgment, supporting risk mitigation and trust.

Architectural patterns embed explicit validation checkpoints within workflows, requiring human review before high-impact actions like contract finalizations, financial transactions, or infrastructure changes. Agents present explanations, proposed steps, and contextual metadata to reviewers for informed intervention. Escalation and rollback mechanisms support safe error correction without disrupting operations.
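
A validation checkpoint can be modeled as a gate that routes high-impact actions to a reviewer callback before execution. In the sketch below, `ProposedAction`, the monetary threshold, and the reviewer policy are hypothetical; a real deployment would typically enqueue the request asynchronously and persist the decision for audit.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    name: str
    amount: float
    rationale: str  # explanation shown to the reviewer


def execute_with_checkpoint(action: ProposedAction,
                            approve: Callable[[ProposedAction], bool],
                            review_threshold: float) -> str:
    """Run low-impact actions directly; gate high-impact ones on approval."""
    if action.amount >= review_threshold:
        if not approve(action):
            return "rejected"
        return "executed_after_approval"
    return "executed"


def cautious_reviewer(action: ProposedAction) -> bool:
    """Stand-in for a human decision: approve only well-explained requests."""
    return len(action.rationale) >= 20


small = ProposedAction("refund", 25.0, "duplicate charge")
large_ok = ProposedAction("wire", 50_000.0, "invoice 1182 approved by finance lead")
large_bad = ProposedAction("wire", 50_000.0, "urgent")

results = [
    execute_with_checkpoint(a, cautious_reviewer, review_threshold=1_000.0)
    for a in (small, large_ok, large_bad)
]
```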

Continuous monitoring frameworks capture comprehensive telemetry: real-time API success rates and latencies, memory consistency metrics, planning confidence scores, and heuristic validation outputs contribute to holistic visibility. Aggregated dashboards with drill-down analytics empower engineering and operational teams with actionable intelligence.

Automated anomaly detection layers identify deviations from baselines—sudden API error surges, memory leaks, or decision-pattern irregularities—triggering alerts or automated containment actions like throttling or agent rollback. This instrumentation supports operational stability and security incident response.
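
As a simple illustration of baseline deviation checks, the sketch below flags an observation whose z-score against recent history exceeds a threshold. The sample error rates and the threshold of 3.0 are made up for the example, and real detectors often need seasonality handling or more robust statistics.

```python
from statistics import mean, stdev


def is_anomalous(baseline, observed, z_threshold=3.0):
    """Flag an observation that deviates strongly from the baseline window."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # requires at least two baseline points
    if sigma == 0:
        return observed != mu
    return abs(observed - mu) / sigma > z_threshold


# Hypothetical per-minute API error rates collected during normal operation.
baseline_error_rates = [0.010, 0.012, 0.009, 0.011, 0.010]

surge = is_anomalous(baseline_error_rates, 0.08)
normal = is_anomalous(baseline_error_rates, 0.0105)
```

A positive result could feed an alert or a containment action such as throttling the affected tool.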

Careful design ensures human oversight and monitoring augment rather than hamper agent throughput. Asynchronous pipelines prevent bottlenecks; prioritization heuristics streamline human approval cycles to minimize latency impact.

Notable examples include healthcare diagnostic AI systems that mandate clinician sign-off on flagged cases exhibiting low confidence or novel symptom patterns. Continuous monitoring detected subtle shifts after model updates, supporting rapid remediation. Over extended deployment, this approach preserved diagnostic accuracy while handling routine cases autonomously.

Embedding human-in-the-loop models as an integral part of AI agent workflows institutionalizes iterative learning, error correction, and incremental trust—cornerstones on the path to robust autonomy at scale.

Together, security, scalability, and human oversight define a foundational operational triad underlying dependable autonomous AI agents that integrate reasoning, tooling, and memory to solve real-world problems reliably.

Applications and Use Cases of Autonomous AI Agents

Industry Applications Benefiting from Autonomous AI Agents

Autonomous AI agents power transformative workflows across engineering and operational domains by orchestrating multi-step, context-aware tasks involving planning, tool use, and persistent memory, far beyond reactive scripts.

In backend services, agents autonomously monitor telemetry, diagnose faults, and enact remediation workflows—balancing rapid incident response with resource constraints and avoiding alert fatigue. Persistent memory structures capture historical fault data and action efficacy, enabling adaptive strategies over time. Tool integration spans cloud APIs, infrastructure orchestration platforms, and alerting systems, requiring secure, reliable external interaction. These agents navigate partial visibility, noisy inputs, and concurrency, highlighting engineering challenges in memory consistency and failure handling.

Within data pipelines, autonomous agents coordinate extraction, transformation, and loading workflows by dynamically adapting job schedules based on data freshness, task dependencies, and resource availability. Integrations with distributed message buses, databases, and monitoring APIs necessitate resilient, asynchronous tool interaction and checkpointed state management. Agents adjust plans on task failures or delays, enabling progressive recovery without manual intervention—critical to data reliability in enterprise ecosystems.

API federation scenarios employ autonomous agents to mediate calls across heterogeneous services with varying SLAs, rate limits, and authentication schemes. Agents dynamically select endpoints, retry failed requests with backoff, and merge responses while maintaining consistency and staying within call quotas. Distributed consistency models in memory underpin the coherence of federated data views over time.
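
Retrying with backoff is often implemented as capped exponential delays with random jitter, so that many agents do not retry in lockstep. The sketch below assumes transient failures surface as `ConnectionError` or `TimeoutError`; the function name, default delays, and the injectable `sleep` and `jitter` parameters are choices made for this example.

```python
import random
import time


def retry_with_backoff(fn, max_attempts=4, base_delay_s=0.5, max_delay_s=8.0,
                       sleep=time.sleep, jitter=random.random):
    """Call fn, retrying transient failures with capped exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except (ConnectionError, TimeoutError):
            if attempt == max_attempts:
                raise  # retry budget exhausted; surface the error
            cap = min(max_delay_s, base_delay_s * 2 ** (attempt - 1))
            sleep(cap * jitter())  # jitter spreads retries across callers


attempts = [0]


def flaky_endpoint():
    """Hypothetical endpoint that times out twice before succeeding."""
    attempts[0] += 1
    if attempts[0] < 3:
        raise TimeoutError("gateway timeout")
    return "payload"


delays = []  # record delays instead of sleeping so the demo runs instantly
result = retry_with_backoff(flaky_endpoint, sleep=delays.append,
                            jitter=lambda: 1.0)
```

Only error types known to be transient are retried here; validation or authorization failures should propagate immediately, since repeating them wastes quota.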

These examples illustrate that autonomous AI agents leverage the synergy of advanced planning, secure tool use, and coherent memory to deliver scalable, adaptive solutions across a spectrum of technical contexts marked by complexity, latency variability, and failure modes.

Leveraging Open Source Frameworks and Community Resources

The accelerating evolution of autonomous AI agents benefits from a vibrant open source ecosystem offering robust frameworks and tooling that streamline common challenges and speed development cycles.

Frameworks tailored for LLM-powered agents—such as LangChain, AutoGPT, and Microsoft Semantic Kernel—abstract core patterns including modular tool integration pipelines, persistent memory backends, and incremental planning. They operationalize chaining diverse API calls, managing contextual states, and refining plans via reusable building blocks. LangChain, for example, enables prompt template composition, flexible state storage (vector DBs, relational stores), and tool wrappers, simplifying integration across varied APIs. See the LangChain documentation for architecture details.

Conversational AI platforms like Rasa and Botpress have evolved to include persistent context retention and decision-tree planning suitable for multi-turn workflows extending beyond simple chatbot interactions. Integration tooling such as OpenBridge, along with AsyncAPI (a specification for describing event-driven APIs), helps scaffold scalable, secure API connectivity for distributed multi-agent deployments, which is crucial for fault tolerance and access control in complex environments.

Community best practices emphasize immutable logs for auditing, lifecycle management of memory, and explicit error handling during multi-tool invocation using circuit breakers, controlled retries, and fallbacks embedded within planners. Reusable plugins and adapters enable rapid domain adaptation while preserving transparency and maintainability. Examples include connectors for Salesforce, QuickBooks, or HL7 APIs, which facilitate financial or healthcare agent development without reimplementing integration layers, though they call for vigilance against dependency bloat and security risks.

Typical engineering patterns combine off-the-shelf vector memory stores (Pinecone, Weaviate) with custom API connectors encapsulating domain-specific logic. This hybrid model balances flexibility and scalability, trading off increased latency from rich contextual embeddings against the risk of losing critical context if memory is too sparse.

Selecting frameworks involves trade-offs: balancing latency, tooling extensibility, community activity, and security maturity. Established projects tend to offer better support and documentation but may lack domain-specific features; niche tools might provide optimization yet suffer from smaller ecosystems. Transitioning from experimental setups to production demands rigorous validation, security audits, and performance testing beyond framework defaults.

Leveraging open source accelerates autonomous AI agent development yet carries an engineering responsibility for rigorous vetting and integration soundness, ensuring dependable operation within heterogeneous, mission-critical infrastructures. This foundation frames deeper discussions on architectural design patterns supporting resilient, production-grade agent deployments.

Key Takeaways

- Autonomous AI agents are systems capable of self-directed task execution through tightly integrated decision-making, external tool invocation, and persistent memory. Engineering these agents requires thoughtful coordination of reasoning workflows, API integrations, and state management to balance responsiveness, reliability, and scalability in complex, dynamic environments.

- Modular architecture separating reasoning, memory, and tool integration enables independent scaling, improved fault isolation, and maintainability, reducing monolith-induced complexity.

- Symbolic and LLM-driven reasoning pipelines present divergent trade-offs in interpretability, adaptability, and operational cost. Hybrid models combining rule-based rigor with neural flexibility yield balanced solutions for complex applications.

- Persistent memory architectures underpin multi-step workflows and context retention, balancing latency, consistency models, and complexity of overwrite or pruning strategies that materially impact agent coherence.

- Secure, standardized tool and API integration protocols enforce interface contracts and sandbox execution, mitigating risks of unexpected behavior or vulnerabilities—especially vital when using third-party or open source tools.

- Human-in-the-loop mechanisms complement autonomy, providing manual oversight and corrective checkpoints that safeguard system reliability, particularly in high-stakes or regulatory contexts.

- Observability and failure management are crucial for monitoring task success, automating retries, detecting anomalies, and handling exceptions across reasoning, memory, and tool execution components.

- Scalability considerations demand concurrent workflow management, distributed state synchronization, and granular resource allocation to handle memory contention and API rate limits effectively.

- Open source frameworks accelerate development but introduce security and compatibility considerations requiring thorough vetting, versioning discipline, and adherence to engineering best practices to avoid technical debt or system compromise.

Together, these principles guide engineers in architecting autonomous AI agents capable of reliable, interpretable, and scalable tool-augmented reasoning and memory across diverse operational domains.

Conclusion

Autonomous AI agents mark a fundamental shift from static automation toward resilient, context-aware systems capable of sustained environment interaction, dynamic reasoning, and complex task orchestration. Successfully engineering these agents demands sophisticated integration of continuous perception, hybrid reasoning frameworks—encompassing symbolic, LLM-powered, or blended approaches—and robust, persistent memory mechanisms.

Equally pivotal are secure, scalable external tool integrations and fault-tolerant architectures that anticipate inevitable API and network uncertainties inherent in distributed deployments. Embedding human oversight and end-to-end observability further anchors operational safety and trust, balancing autonomy with control.

As these agents scale across increasingly complex, distributed infrastructures and diverse use cases, the engineering imperative transitions from solving isolated technical challenges toward holistic architectural stewardship—ensuring reliability, maintainability, transparency, and correctness under pressure. Future innovation hinges on exposing architectural trade-offs clearly through monitoring, enabling automated recovery, and evolving hybrid cognitive workflows that gracefully adapt to shifting operational realities.

The pressing design question facing engineers is not if autonomous agents will encounter complexity or failure at scale, but whether system architectures empower visibility, testability, and resilience—qualities essential for dependable AI augmentation of critical workflows in increasingly demanding environments.